For a project to succeed, quality assurance (QA) and testing must be treated as one of the most important parts of custom software development.

However, this does not always happen. According to the 2023 Software Testing and Quality Report by TestRail, lack of expertise, staff, and time are among the top concerns of QA professionals.

Quality assurance is complex: it requires deep project knowledge, a vision of the expected outcome, and adherence to quality standards. Unfortunately, QA remains undervalued in many companies, because its results stay invisible until neglected software starts losing its edge.

1. What Makes QA Specialists and QA in Software Development So Important?

During development, myriad risks jeopardize the product’s success.

Issues emerge when the actual results of a Sprint deviate from what developers, the product owner, or end users expect the program to do. Usually, the root cause lies in inconsistent project requirements, bugs or errors in the source code, or specifics of the target platform.

It is the QA engineer’s task to identify, address, and prevent bugs as early as possible throughout the software development life cycle by:

- comparing deliverables to project requirements,

- studying software behavior on different platforms,

- finding and describing flaws, defects, and inconsistencies, and

- thinking about how to lower risks and avoid specific errors in the future.

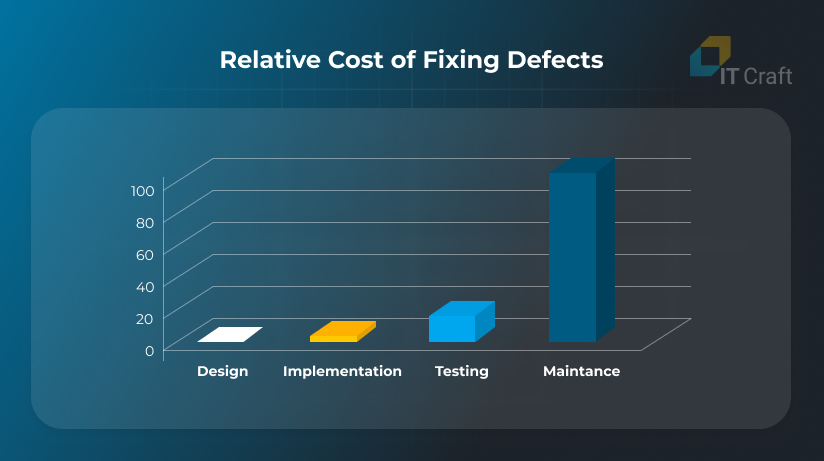

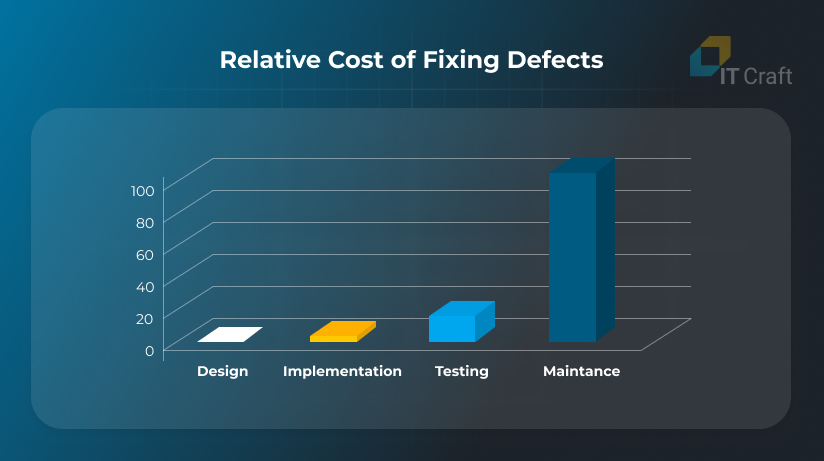

According to old but still relevant research on the cost of bugs throughout the development cycle, bugs discovered at the testing stage are 15 times more expensive to fix than bugs discovered at the design stage. Other statistics show how often testing falls short:

- 47% of apps require more intensive testing than they receive during software development, making them too flawed and buggy to meet user needs and causing high abandonment rates

- 75% of mobile apps do not meet security standards

How does adding quality assurance to software testing increase a project’s bottom line?

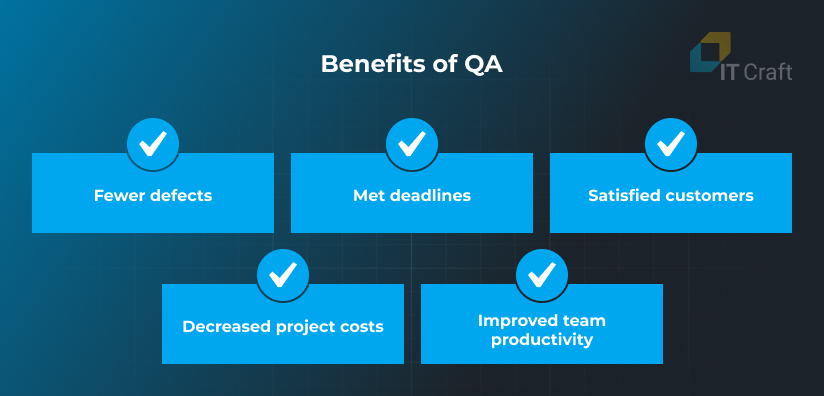

- Reduce the number of defects

While developers cannot eliminate every bug, QA activities detect the bugs that affect software stability, especially severe defects that can lead to crashes, data leaks, and similar incidents.

- Decrease overall project costs

Fixing bugs during requirements analysis and software development costs less than fixing bugs discovered in production, because early fixes require less rework and therefore less of developers’ expensive time.

QA helps keep the project timeline within the estimated range and ensures that delivered functionality integrates with the rest of the codebase without causing crashes.

- Increase the team’s productivity

Higher source code quality resulting from QA activities boosts the productivity of the entire project team. Working with a stable, high-quality codebase, developers can focus on adding new features, enhancing the user experience, optimizing resource consumption, and so on.

Conversely, when QA is insufficient, developers spend much of their time detecting and fixing emerging issues, which slows down delivery.

- Improve customer satisfaction

Customers rarely forget or forgive bugs, freezes, and poor performance. By providing a smooth experience, QA helps software providers retain users who might otherwise switch to a competitor.

2. Difference between Software Quality Assurance, Quality Control, and Testing

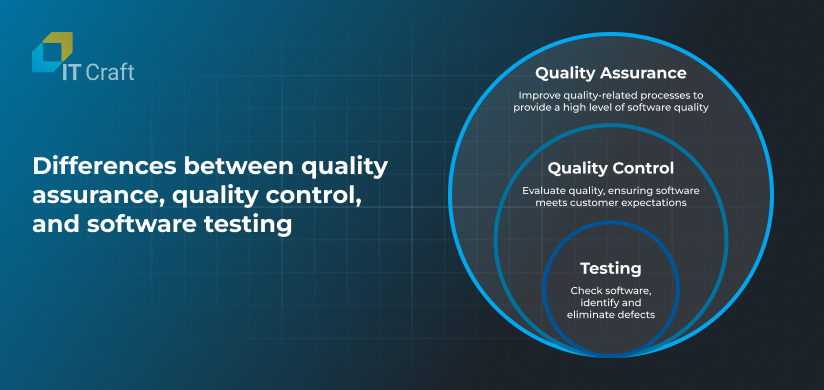

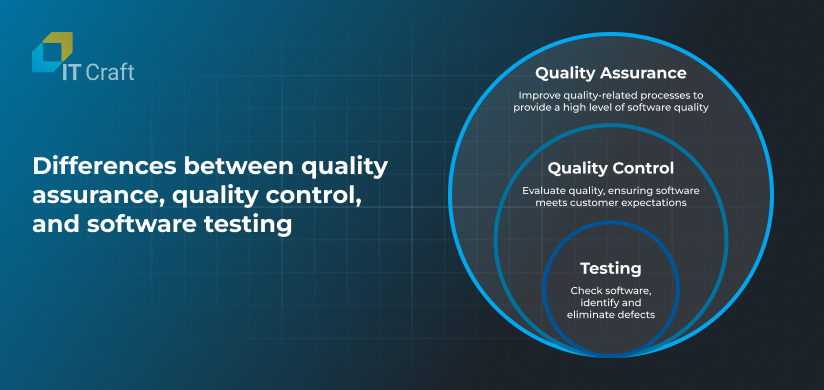

Quality assurance (QA), quality control (QC), and testing all describe processes aimed at ensuring high software quality. Because the three share the same goal, the terms are often confused and used interchangeably.

However, QA, QC, and testing represent different aspects of quality management, with varying team roles, scopes of work, approaches, and required skills.

We analyze differences between quality assurance, quality control, and software testing below.

Quality Assurance

Quality assurance sets quality standards.

It also involves meeting quality standards and delivering software with as few bugs as possible. As such, quality assurance is broader than quality control and testing, emphasizing the organizational level of quality management.

QA focuses on creating and implementing guidelines, requirements, and procedures throughout the product development life cycle, which is essential for meeting stakeholders’ expectations.

It includes preventive activities at the requirements management, development, testing, and production stages, going beyond testing and controlling the quality of produced source code.

Key characteristics of QA:

- Type: preventive

- Objective: prevent defects; ensure the software meets quality standards

- Focus: processes and requirements

- Timing: throughout the entire development cycle, as an integral part of all product development stages

- Participants: external stakeholders, business analysts, project managers, QA engineers, and software developers

Quality Control

Quality control ensures compliance with set standards.

While QA generally focuses on preventing defects, QC concentrates on finding and fixing them. As such, QC is used to verify and validate the produced software and related processes, ensuring the source code meets established quality requirements.

QC engineers audit the project, checking if the team followed specific requirements, guidelines, and procedures while developing software. They examine differences between the desired and actual product, looking for how well the product fits the provided specification.

QC is needed to ensure the satisfaction of stakeholders and end users.

Key characteristics of QC:

- Type: reactive

- Objective: identify defects; verify the quality of processes and the end product

- Focus: produced source code and its compliance with requirements

- Timing: after the team has established guidelines and requirements, and produced source code

- Participants: testing team or single QA/QC engineer

Software Testing

Testing ensures that bugs and defects do not make it into a release.

As part of both QA and QC, testing is a narrowly focused activity that involves running code and checking if it works correctly.

Testing engineers examine software for bugs and defects, ensuring that produced source code meets technical requirements.

Testing is a continuous process that runs parallel to software development and includes multiple activities. These are crucial for verifying various software aspects, such as user load, performance on different devices, security, and communication between system parts.

Key characteristics of testing:

- Type: ongoing

- Objective: identify bugs and defects in the produced source code

- Focus: technical issues that can affect software performance and the user experience or block integration of new functionality

- Timing: part of Sprint activities alongside software development

- Participants: QA and test engineers; software developers

3. QA and Testing as Part of the Software Development Life Cycle

The software development life cycle (SDLC) is a structured process for turning a client’s vision into a high-quality digital system efficiently and cost-effectively.

The SDLC lets the development team:

- determine project challenges,

- address project risks efficiently,

- maintain high team productivity under changing requirements,

- systematically measure project deliverables, and

- meet end users’ expectations.

The six essential SDLC stages can vary in their implementation. Some stages can be completed consecutively or in parallel, depending on the chosen development model (Waterfall, Agile, Scrum, Spiral, etc.).

But regardless of the development model, quality assurance, quality control, and testing play an important role in each stage of the software development life cycle.

Let’s take a closer look:

Analysis and Planning

This stage lays the foundation for the project’s success. The software development team discusses the project vision with the client and prepares a plan that includes goals, scope and timeline estimates, expected deliverables, the definition of done, and more.

QA engineers review and validate the project plan and requirements to detect inconsistencies and defects as early as possible. They also identify test cases and start drafting a test plan aligned with the project requirements.

Software Architecture Design

During the software architecture design stage, the team determines the best solution for a secure and scalable software architecture. It decides on frameworks and modules that speed up development, as well as on the database architecture and software infrastructure.

QA engineers participate in discussions and review technical project decisions to avoid system and database architecture flaws and identify possible security vulnerabilities and future problems with scaling.

Implementation

The development team works on the software codebase. It customizes frameworks, develops new functionality, and integrates third-party modules according to client needs. Usually, teams work in fast-paced iterations to ensure flexibility in meeting clients’ changing needs.

Software developers write unit tests to check if the produced code works correctly.
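For illustration, a unit test written with Python’s built-in `unittest` module might look like the minimal sketch below. The `apply_discount` function and its rules are hypothetical, invented only to give the tests something to check; each test exercises one behavior in isolation.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical unit under test: return the price after a percentage discount."""
    if price < 0:
        raise ValueError("price must be non-negative")
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTest(unittest.TestCase):
    """Each test checks one behavior of apply_discount in isolation."""

    def test_regular_discount(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_zero_discount_keeps_price(self):
        self.assertEqual(apply_discount(99.99, 0), 99.99)

    def test_rejects_out_of_range_percent(self):
        with self.assertRaises(ValueError):
            apply_discount(100.0, 120)
```

Saved in a file named `test_*.py`, the class is discovered and run by `python -m unittest`.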

Testing

As a rule, testing is part of the implementation process and runs simultaneously with software development. The QA/testing team examines the source code as soon as it is ready.

QA engineers focus on code review to discover logical errors, deviations from standards, and possible security issues. They apply different types of testing to simulate real user behavior and check how the software responds. User acceptance testing is important to ensure software meets client requirements.

Deployment

Users work with software in a production environment, separated from development and testing environments. The development team must seamlessly update the software in the production environment while providing end users with 24/7 access to required functionality.

QA engineers participate in post-deployment testing to ensure the software works as expected on live servers.

Maintenance

The dedicated team monitors live software, ensuring its constant uptime. They also make necessary updates and take care of emerging issues. In addition, the monitoring team can collect user feedback and transfer it to the development team.

Some bugs and flaws become visible only in the live environment. This is why it is crucial that the QA and testing team receives user feedback, identifies issues, and reproduces them, helping developers improve the user experience.

Additionally, the QA team works on process improvements, helping to automate tasks and prevent recurring issues.

4. Six Steps of the Software Testing Life Cycle

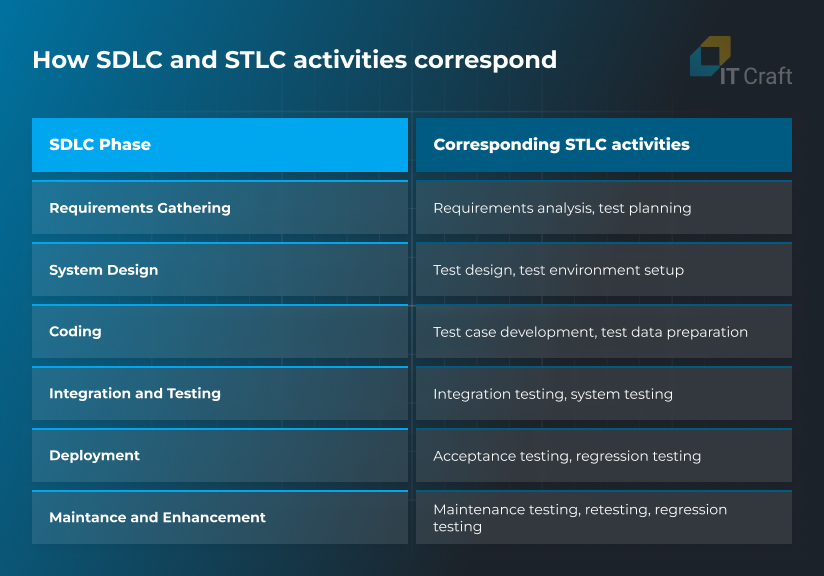

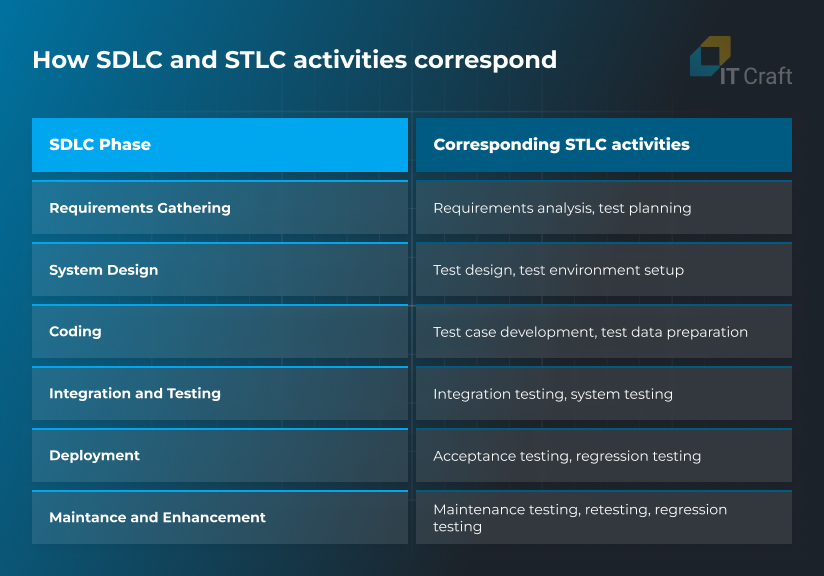

The software testing and QA team follows a software testing life cycle (STLC), focusing on software quality assurance and testing responsibilities.

To execute all necessary QA/QC/testing activities, the STLC includes six steps:

- Requirements analysis and test planning

- Test case design and development

- Testing environment setup

- Test implementation and execution

- Exit criteria and reporting

- Cycle closure

This workflow covers the entire process needed to efficiently improve software quality.

It’s worth noting that the STLC is part of the software development life cycle and requires tight coordination between developers and QA engineers. The feedback loop is key to maintaining software quality at all stages.

Here is how SDLC and STLC activities correspond:

This is what IT Craft’s QA specialists do throughout the STLC:

Step 1: Requirements Analysis and Test Planning

During requirements analysis and test planning, the quality assurance and testing team plans what it will test within the project and how.

The team works on a testing strategy that ensures the best value for the project, studying available specifications and requirements to identify testing objectives and prioritize specific activities.

For instance, QA engineers decide on testing approaches, types (functional, non-functional, smoke, black box testing, etc.), and manual and automated testing requirements.

After the team has prepared a strategy, they turn it into a detailed testing plan to harmonize their workflow with the rest of the project team.

The plan contains a prioritized schedule for test implementation, execution, and evaluation. QA engineers define specifications of test activities and estimate the resources needed to implement planned activities.

The team must also document future steps in the test plan. To ensure successful test completion, QA engineers define testing activities, related details, test structures, and templates for test documentation.

Improving testing is a continuous activity, so the team should regularly update the test plan. Testing engineers must document changes in testing throughout the entire software life cycle to avoid a drop in productivity for the whole project team.

Main requirements analysis and test planning activities:

- Create a testing strategy

- Determine testing approaches and activities

- Write down priorities, schedules, specifications, and estimated resources in a test plan

- Update the test plan
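A test plan is usually a document, but its core, a prioritized list of activities with effort estimates, can be sketched as data. The minimal Python sketch below assumes a simple numeric priority (1 runs first) and hour-based estimates; the class names, activity names, and numbers are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass(order=True)
class PlannedActivity:
    """One activity in a test plan; only `priority` is used for ordering (1 = first)."""
    priority: int
    name: str = field(compare=False)
    estimated_hours: float = field(compare=False)


@dataclass
class QAPlan:
    """Hypothetical test plan: a prioritized schedule plus resource estimates."""
    activities: list[PlannedActivity] = field(default_factory=list)

    def add(self, activity: PlannedActivity) -> None:
        self.activities.append(activity)

    def schedule(self) -> list[str]:
        # Activities in execution order, highest priority first
        return [a.name for a in sorted(self.activities)]

    def total_effort(self) -> float:
        # Total estimated hours, i.e. the resources the plan asks for
        return sum(a.estimated_hours for a in self.activities)


# Example plan; names, priorities, and hours are invented for illustration
plan = QAPlan()
plan.add(PlannedActivity(2, "Regression suite", 16))
plan.add(PlannedActivity(1, "Smoke tests", 4))
plan.add(PlannedActivity(3, "Usability review", 8))
print(plan.schedule())      # ['Smoke tests', 'Regression suite', 'Usability review']
print(plan.total_effort())  # 28
```

Updating the plan then amounts to adding, removing, or re-prioritizing activities and regenerating the schedule.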

Step 2: Test Case Design and Development

After the testing strategy is ready, the testing team starts developing test cases. They review the test basis (which can be a system requirement, technical specification, source code, or a business process) while evaluating the testability of the entire system or its individual components.

Balance is key to success at this stage. On the one hand, test cases must be extensive, covering as many use cases as possible and, ideally, the entire codebase.

On the other hand, QA engineers must stay within budget and timeline limitations. This is why they should identify what test cases and conditions to prioritize while skipping others that are rare and not explicitly mentioned in the software specification, such as support for older browser versions.

This way, the team designs and prioritizes high-level test cases, describing how to check specific functionality, including preconditions, actions, and expected results.
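The preconditions, actions, and expected results structure maps naturally onto code. The sketch below is a hypothetical illustration, not any test management tool’s format: each `CaseSpec` builds its starting state, performs one action, and compares the actual result with the expected one, and cases run in priority order.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class CaseSpec:
    """A high-level test case: preconditions, an action, and the expected result."""
    title: str
    priority: int                          # 1 = highest
    precondition: Callable[[], dict]       # builds the starting state
    action: Callable[[dict], Any]          # the step performed on that state
    expected: Any                          # the result the specification promises


def run_case(case: CaseSpec) -> bool:
    """Set up the preconditions, perform the action, and compare actual vs expected."""
    state = case.precondition()
    actual = case.action(state)
    return actual == case.expected


# Hypothetical shopping-cart cases, written as a tester might design them
cases = [
    CaseSpec("Empty cart total is zero", 2,
             precondition=lambda: {"cart": []},
             action=lambda s: sum(s["cart"]),
             expected=0),
    CaseSpec("Cart total sums item prices", 1,
             precondition=lambda: {"cart": [10.0, 5.5]},
             action=lambda s: sum(s["cart"]),
             expected=15.5),
]

for case in sorted(cases, key=lambda c: c.priority):
    print(case.title, "->", "pass" if run_case(case) else "fail")
```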

The quality assurance and testing team must constantly review and update test cases to ensure relevance.

Main test case design and development activities:

- Evaluate the test basis and test objects

- Design and prioritize test cases

- Assess conditions, actions, and expected results

- Keep test cases in good order

Step 3: Testing Environment Setup

Unification of development, testing, and production environments is crucial for software quality. Otherwise, a bug may not reproduce in a developer’s environment, yet the app may crash or constantly freeze on a tester’s or user’s machine.

The QA and testing team must agree with the rest of the project team on which user environments the software should support: the minimum amount of RAM, supported browsers and operating systems, and more.

QA/testing engineers also identify required infrastructure, frameworks, and software tools for executing test cases, reporting, and tracking defects.

DevOps engineers ensure that the entire project team uses the same environments. Still, QA engineers need to supervise the process, ensuring that development, testing, and live environments remain identical.

Main testing environment and setup activities:

- Determine environment requirements

- Decide on the required hardware, framework, and testing tools

- Set up the test environment

- Track the consistency of development, testing, and live environments
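Part of tracking environment consistency can be automated by comparing what each environment reports about itself. The sketch below assumes each environment’s component versions are available as a simple name-to-version mapping (the `find_drift` helper and the versions shown are hypothetical) and lists every setting that differs or is missing.

```python
def find_drift(environments: dict[str, dict[str, str]]) -> dict[str, dict[str, str]]:
    """For every setting whose value is not identical everywhere, return a mapping
    of environment name -> value ("<missing>" where the setting is absent)."""
    all_keys: set[str] = set()
    for settings in environments.values():
        all_keys.update(settings)

    drift: dict[str, dict[str, str]] = {}
    for key in sorted(all_keys):
        values = {env: settings.get(key, "<missing>") for env, settings in environments.items()}
        if len(set(values.values())) > 1:  # more than one distinct value = drift
            drift[key] = values
    return drift


# Hypothetical component versions reported by each environment
envs = {
    "development": {"python": "3.12", "postgres": "16", "node": "20"},
    "testing": {"python": "3.12", "postgres": "15", "node": "20"},
    "production": {"python": "3.12", "postgres": "16"},
}

for setting, values in find_drift(envs).items():
    print(setting, values)
```

Here the check would flag the older database in testing and the missing runtime in production, while identical settings stay silent.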

Step 4: Test Implementation and Execution

To ensure flexible software development and meet changing client needs, the quality assurance and testing team makes necessary adjustments to its testing plan and procedures. They finalize, implement, and prioritize test cases and organize them into test suites for convenience.

In this step, QA engineers develop and prioritize test procedures. They create test data, which should approximate actual user data, and prepare test automation scripts when needed.

When part of the software functionality is ready, QA/testing engineers start executing test cases. They log outcomes and compare actual results to expected results, reporting any discovered problems and deviations in software behavior. The testing team then passes this information on to developers, detailing the severity of each issue.

After developers make the necessary fixes, regression testing confirms that the problems do not recur and that the fixes have not broken existing functionality.

Main test implementation and execution activities:

- Execute tests

- Compare actual and expected results

- Discover and report defects

- Conduct regression testing to close issues
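Organizing test cases into suites and logging outcomes can be sketched with Python’s `unittest` module. The `normalize_username` function and both test classes are hypothetical; the summary counts failures and errors, which are the candidates for defect reports.

```python
import unittest


def normalize_username(raw: str) -> str:
    """Hypothetical feature under test."""
    return raw.strip().lower()


class SmokeChecks(unittest.TestCase):
    def test_plain_name(self):
        self.assertEqual(normalize_username("alice"), "alice")


class RegressionChecks(unittest.TestCase):
    # Guards against a previously fixed (hypothetical) defect with surrounding spaces
    def test_strips_whitespace(self):
        self.assertEqual(normalize_username("  Bob "), "bob")

    def test_mixed_case(self):
        self.assertEqual(normalize_username("CaRoL"), "carol")


def build_suite() -> unittest.TestSuite:
    """Group test cases into one suite, smoke checks first."""
    loader = unittest.defaultTestLoader
    suite = unittest.TestSuite()
    suite.addTests(loader.loadTestsFromTestCase(SmokeChecks))
    suite.addTests(loader.loadTestsFromTestCase(RegressionChecks))
    return suite


def execute(suite: unittest.TestSuite) -> dict[str, int]:
    """Run the suite, log each outcome, and summarize the results."""
    result = unittest.TextTestRunner(verbosity=2).run(suite)
    return {
        "run": result.testsRun,
        "failures": len(result.failures),
        "errors": len(result.errors),
    }


print(execute(build_suite()))
```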

Step 5: Exit Criteria and Reporting

Just as organizations can never gather exhaustive project requirements (some things remain out of focus, others change quickly, and chasing completeness leaves businesses in an endless planning loop), software testing can never be fully completed.

Still, there are limits beyond which further testing stops bringing the desired value. These limits are called “exit criteria.”

The development team identifies exit criteria during the planning stage. They can include:

- no remaining priority bugs and defects,

- no violations during the test run,

- stable system in common user environments,

- successfully passing User Acceptance Testing,

and more.

The team checks test logs against the specified exit criteria. Once the software meets them, the team stops testing and sends the software to the deployment or production stage.
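Checking test results against exit criteria is essentially a set of boolean checks. The sketch below encodes the example criteria listed above; the `RunSummary` fields, the reading of "violations" as failed tests, and the 99% crash-free threshold are assumptions, not fixed rules.

```python
from dataclasses import dataclass


@dataclass
class RunSummary:
    """Figures taken from the test logs of one test cycle (hypothetical fields)."""
    open_priority_bugs: int
    failed_tests: int          # treated here as "violations during the test run"
    crash_free_rate: float     # share of sessions without crashes in common user environments
    uat_passed: bool


def unmet_exit_criteria(summary: RunSummary, min_crash_free_rate: float = 0.99) -> list[str]:
    """Return the exit criteria that are not met yet; an empty list means testing can stop."""
    unmet = []
    if summary.open_priority_bugs > 0:
        unmet.append("priority bugs remain open")
    if summary.failed_tests > 0:
        unmet.append("test run has failures")
    if summary.crash_free_rate < min_crash_free_rate:
        unmet.append("system not stable in common user environments")
    if not summary.uat_passed:
        unmet.append("user acceptance testing not passed")
    return unmet


print(unmet_exit_criteria(RunSummary(open_priority_bugs=2, failed_tests=0,
                                     crash_free_rate=0.97, uat_passed=True)))
```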

The responsible QA engineers write a test summary report for project stakeholders and discuss in a retrospective meeting what issues they encountered and what the team could improve.

They can also assess if more tests are needed or update the testing plan if exit criteria need to be changed.

Main exit criteria and reporting activities:

- Check test results against exit criteria

- Move software to further stages

- Write reports

- Discuss areas for improvement

Step 6: Cycle Closure

Testing reaches the closure stage after the end product is ready and shipped to the client. At this point, the QA/testing team compares the initially planned deliverables to actual deliveries.

Team members analyze whether they reached all goals regarding test coverage, timeline, and source code quality. They also analyze the difference between estimates and actual time expenditures and how estimates can be improved in the future.
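Comparing estimates with actual time spent is simple arithmetic: variance = (actual - estimate) / estimate × 100%. The sketch below applies that formula per activity; the `estimate_variance` helper, the activity names, and the hours are hypothetical.

```python
def estimate_variance(estimates: dict[str, float], actuals: dict[str, float]) -> dict[str, float]:
    """Per activity, the percentage by which actual hours exceeded (+) or
    undercut (-) the estimate. Activities without actuals or with a zero
    estimate are skipped."""
    return {
        activity: round((actuals[activity] - planned) / planned * 100, 1)
        for activity, planned in estimates.items()
        if activity in actuals and planned > 0
    }


estimated = {"test design": 40, "execution": 80, "regression": 20}
actual = {"test design": 50, "execution": 76, "regression": 20}
print(estimate_variance(estimated, actual))
```

Activities with a large positive variance are the natural starting point when discussing how estimates can be improved.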

Additionally, the team prepares the required testing deliverables, which the client receives when the team transfers the project codebase and documentation to them. Testing deliverables include:

- test documentation,

- finalized and archived testware,

- testing environment, and

- testing infrastructure.

Main cycle closure activities:

- Compare plans and deliverables

- Evaluate the testing workflow

- Document system acceptance

- Finalize and archive testware

5. Common Software Testing Concepts and Categories

Complexity is one of the key characteristics of the testing process. Quality assurance and testing engineers use 100+ testing types to check different software parts, system states, and responses.

Here are some important ideas that you need to know to better understand quality assurance and testing:

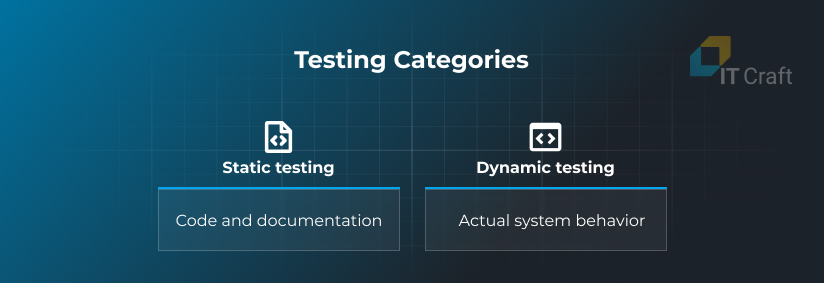

Testing Categories

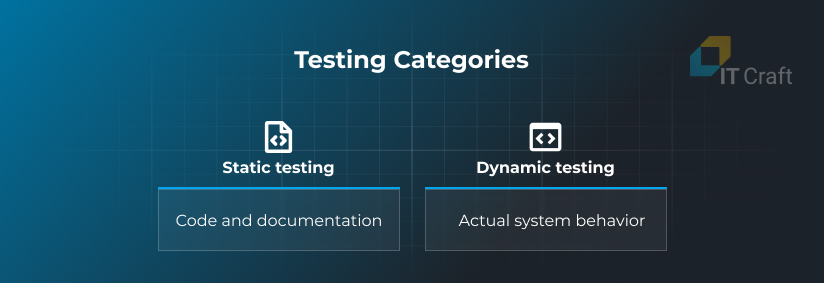

All types of testing fall into one of two broad categories: static testing and dynamic testing.

Static testing does not involve executing tests or running software. Instead, it involves code review, code inspection, and documentation review. Because it uncovers issues before code goes live, static testing eliminates them at the lowest cost.
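Code review and inspection are mostly manual, but automated static analysis follows the same principle: it examines code without running it. As a small, hypothetical example of such a check, the sketch below uses Python’s standard `ast` module to flag bare `except:` clauses, which silently swallow every error. The rule itself is only an illustration.

```python
import ast


def find_bare_excepts(source: str) -> list[int]:
    """Parse Python source without executing it and return the line numbers
    of bare `except:` clauses (handlers that name no exception type)."""
    tree = ast.parse(source)
    return [
        node.lineno
        for node in ast.walk(tree)
        if isinstance(node, ast.ExceptHandler) and node.type is None
    ]


SAMPLE = '''
def load(path):
    try:
        return open(path).read()
    except:
        return None
'''

print(find_bare_excepts(SAMPLE))  # [5]
```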

Dynamic testing requires running actual tests and examining software behavior against testing scenarios. It takes most of the QA and testing team’s time, allowing the team to discover issues that could happen in the real world.

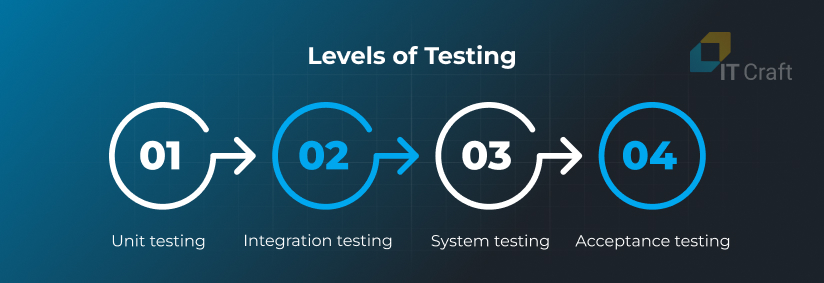

Levels of Testing

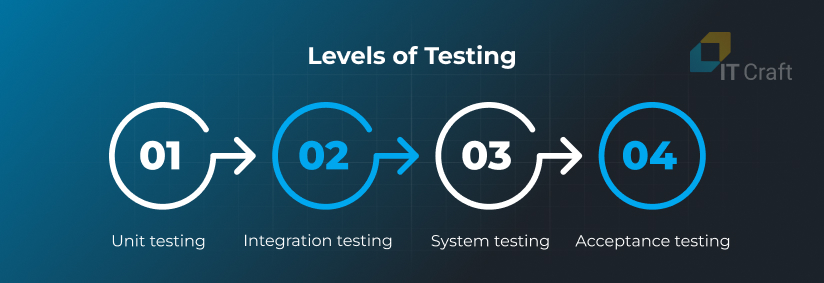

Software testing occurs at four consecutive levels, from a single component to the entire system with all its parts and interdependencies.

Unit testing examines the smallest parts in isolation, such as functions, procedures, or modules, to ensure they work as expected and produce valid results.

It is a developer’s task to prepare automated unit tests. Development platforms and frameworks usually provide tools for unit testing to simplify this activity.

Integration testing is the next step, when developers add newly produced source code to the project codebase. At this level, the team must ensure that the system runs and responds smoothly and that new code does not affect existing system components.

Both software developers and QA engineers work on integration testing.

System testing is used to examine the system as a whole. The testing team checks the system’s compliance with specification requirements regarding functionality and performance.

Acceptance testing, or user acceptance testing, verifies the system from the end user’s perspective and how it aligns with business requirements.

Usually, end users or their representatives perform acceptance testing. They test functionality with which users directly interact, ensuring it meets their needs.

Testing Techniques

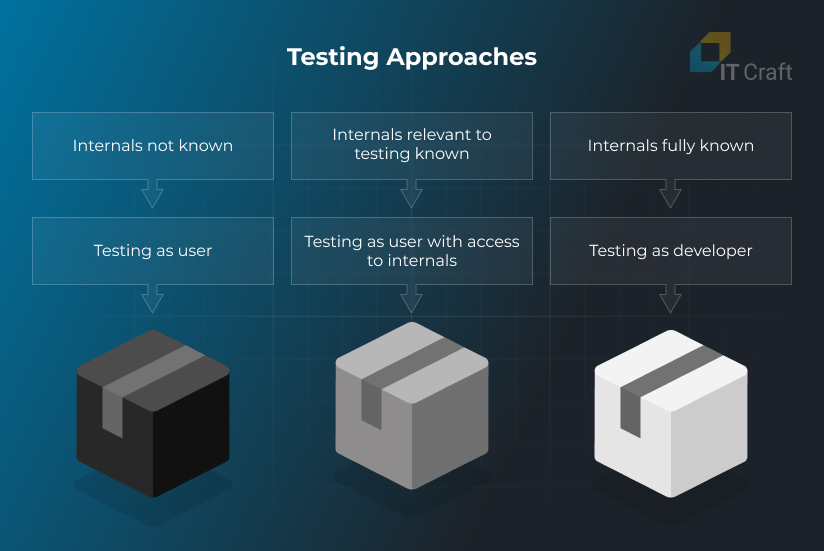

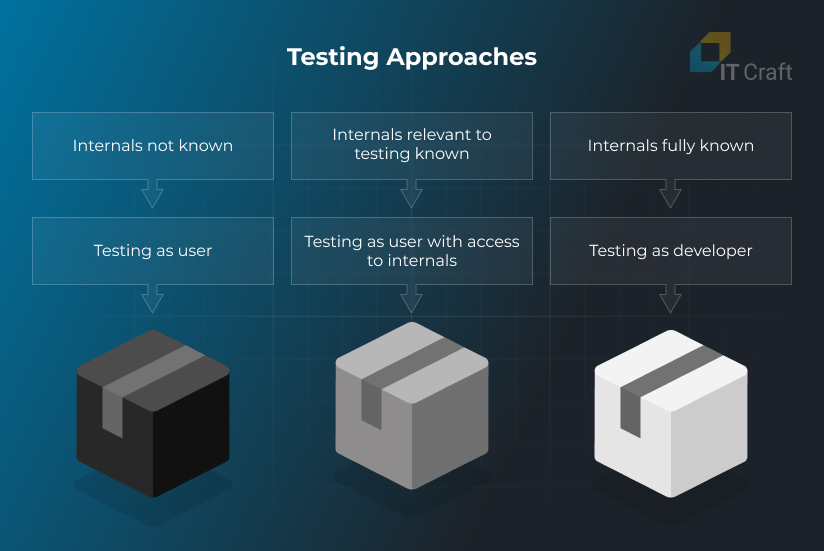

Three common testing techniques are:

- Black box testing

- White box testing

- Gray box testing

Black box testing is used when the tester has no prior knowledge of software structure or logic. It focuses on how software runs and behaves when end users experience it.

White box testing is used to improve the system’s source code and requires knowledge of the system logic and components. In white box testing, QA engineers and developers focus on finding bugs and vulnerabilities, optimizing code, and fixing poor coding practices.

Gray box testing is a mix of black box and white box testing, in which testers have incomplete information. It is used to find bugs and defects in the system that will not look like bugs from an end user’s point of view.
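The difference between black box and white box testing shows in how test cases are derived. In the sketch below, the `shipping_fee` function and its rules are hypothetical: the black box tests come only from the stated behavior, while the white box tests were written after reading the code so that every branch, including the exact threshold comparison, is exercised.

```python
import unittest


def shipping_fee(order_total: float, express: bool) -> float:
    """Hypothetical rule: express costs 15; standard is free from 50, otherwise 5."""
    if express:
        return 15.0
    if order_total >= 50:
        return 0.0
    return 5.0


class BlackBoxTests(unittest.TestCase):
    """Derived only from the (hypothetical) specification, without reading the code."""

    def test_small_order_pays_standard_fee(self):
        self.assertEqual(shipping_fee(20, express=False), 5.0)

    def test_large_order_ships_free(self):
        self.assertEqual(shipping_fee(80, express=False), 0.0)


class WhiteBoxTests(unittest.TestCase):
    """Written after reading the code, so that every branch is exercised."""

    def test_express_branch(self):
        self.assertEqual(shipping_fee(80, express=True), 15.0)

    def test_threshold_uses_greater_or_equal(self):
        self.assertEqual(shipping_fee(50, express=False), 0.0)
```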

Software Testing Models

Depending on project priorities and limitations, the team needs to choose a software testing model or mix some aspects from several models within one approach.

Some models help meet an aggressive deadline, while others work best for incomplete and changing requirements. Still others save costs on simple projects with a clearly defined scope.

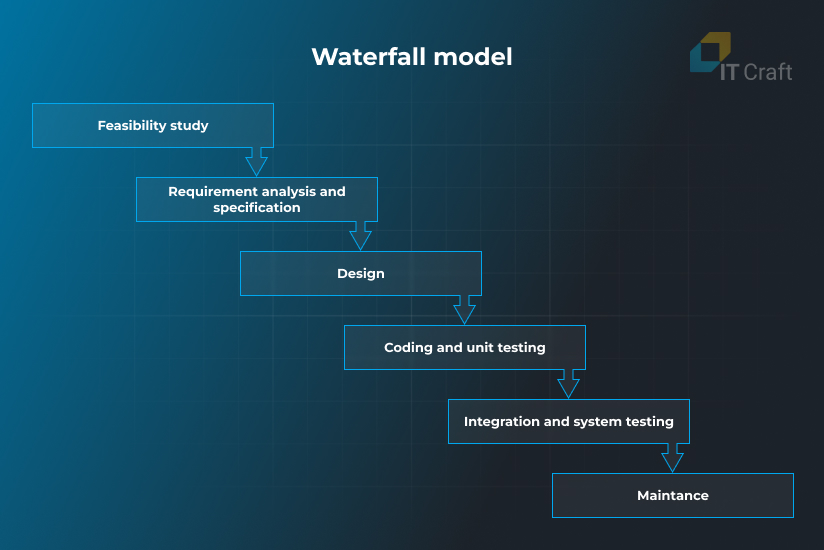

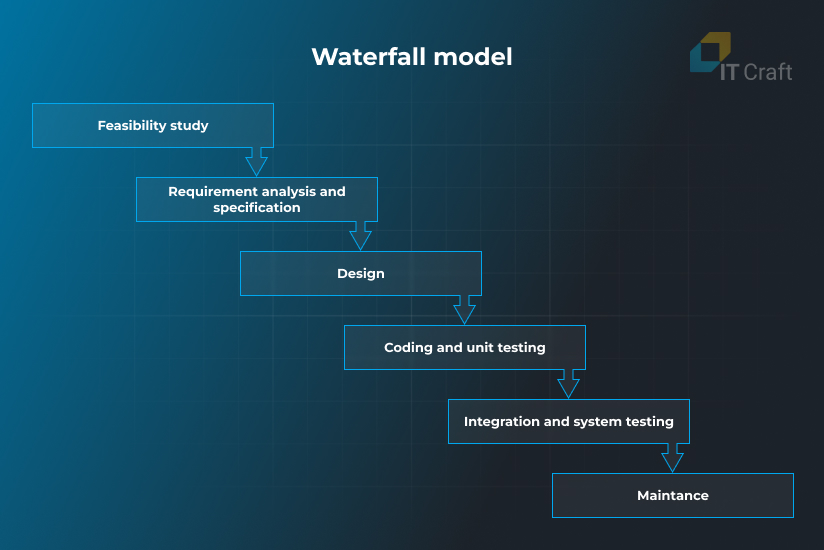

Following the Waterfall model, the project team identifies all project requirements and prepares a comprehensive plan that cannot easily be changed.

After each phase is completed, the testing team performs the testing activities in the project plan.

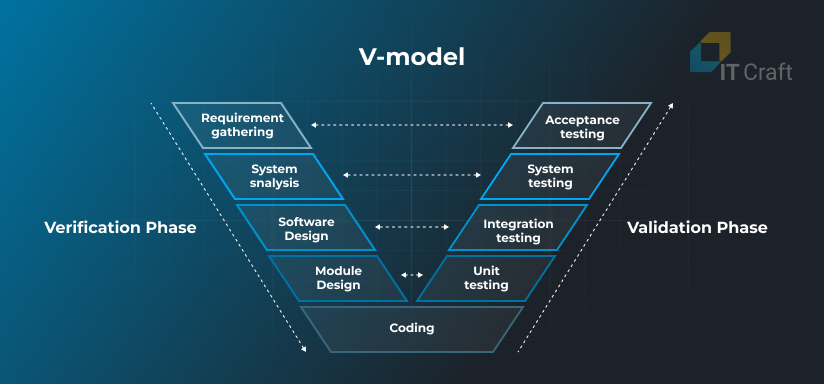

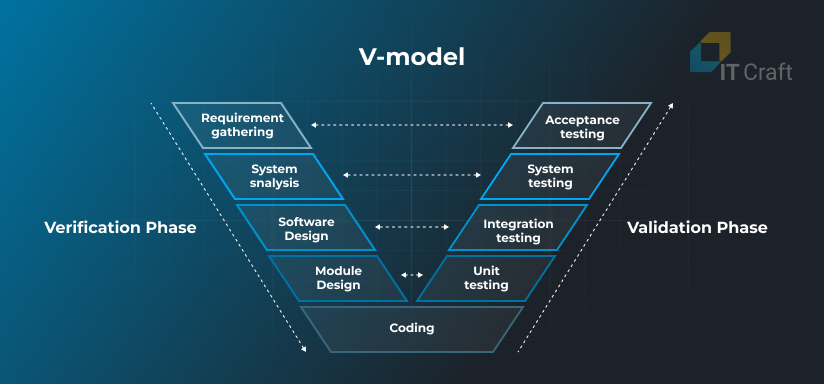

In the V-model, development and testing activities occur simultaneously, making it more flexible than the Waterfall model.

Testing starts at the unit level and expands to the integration, system, and acceptance levels. Each testing stage corresponds to a relevant development stage, enhancing collaboration inside the project team.

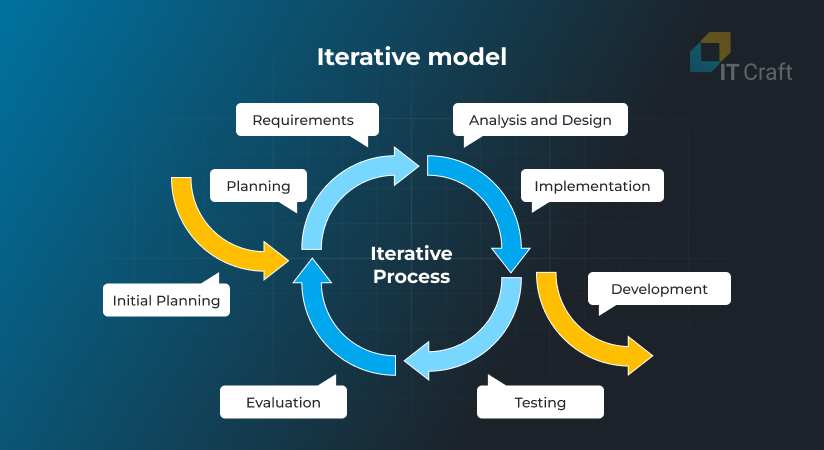

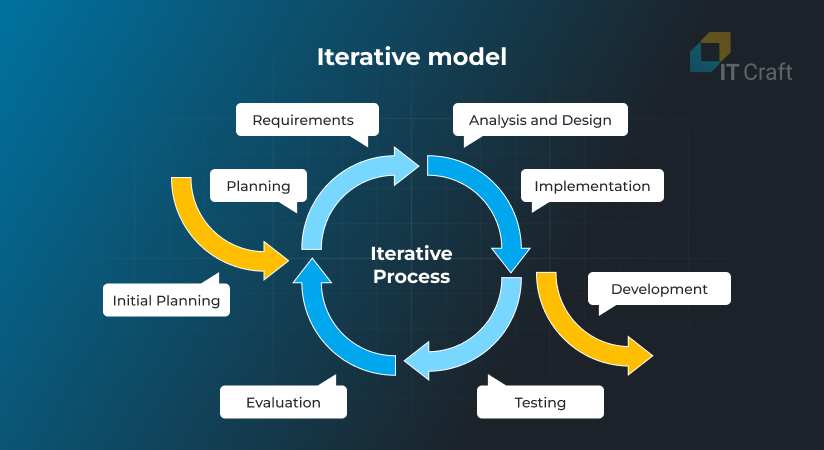

With the iterative model, the project team implements requirements in a series of mini-Waterfall iterations, which it uses to deliver and then improve a primary product version: software that users can start using but that requires further elaboration.

The project team continuously improves the product toward an optimal version, while the testing team verifies each change as it is incorporated into the system.

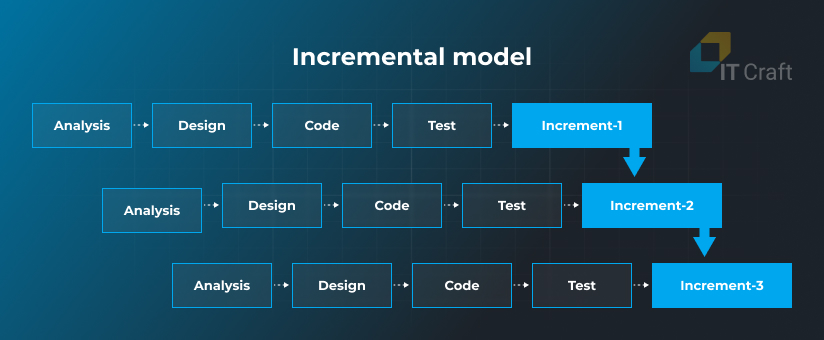

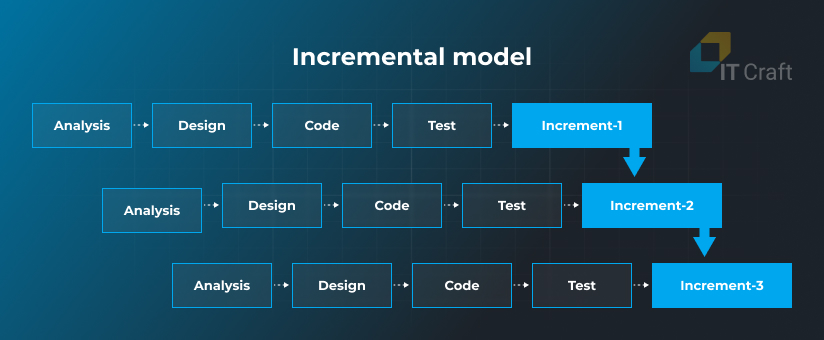

The incremental model is similar to the iterative model in terms of repetition. However, in the case of incremental development, the project team breaks down the scope into small parts (or increments) and delivers them one after another in short cycles.

Each cycle includes planning, design, development, and testing, which are performed simultaneously, allowing for quick product delivery.

In test-driven development (TDD), automated tests for a piece of functionality are prepared before the source code is written. Developers then write code that passes those tests.

Test-driven development clarifies up front how the code should behave, saving time on identifying and fixing bugs.
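A minimal sketch of the TDD rhythm with Python’s `unittest`: the test class is written first (and would fail, since `slugify` does not exist yet), then just enough code is written to make it pass. The `slugify` feature is a hypothetical example.

```python
import unittest


# Step 1: the tests are written first; at this point they fail because slugify() is missing.
class SlugifyTest(unittest.TestCase):
    def test_lowercases_and_joins_words_with_hyphens(self):
        self.assertEqual(slugify("Hello World"), "hello-world")

    def test_drops_punctuation(self):
        self.assertEqual(slugify("QA & Testing!"), "qa-testing")


# Step 2: the developer writes just enough code to make the tests pass.
def slugify(title: str) -> str:
    """Hypothetical feature: turn a title into a URL slug."""
    # Replace every non-alphanumeric character with a space, then split into words
    words = "".join(ch if ch.isalnum() else " " for ch in title.lower()).split()
    return "-".join(words)
```

A third step, refactoring, usually follows: the code is cleaned up while the same tests confirm that its behavior has not changed.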

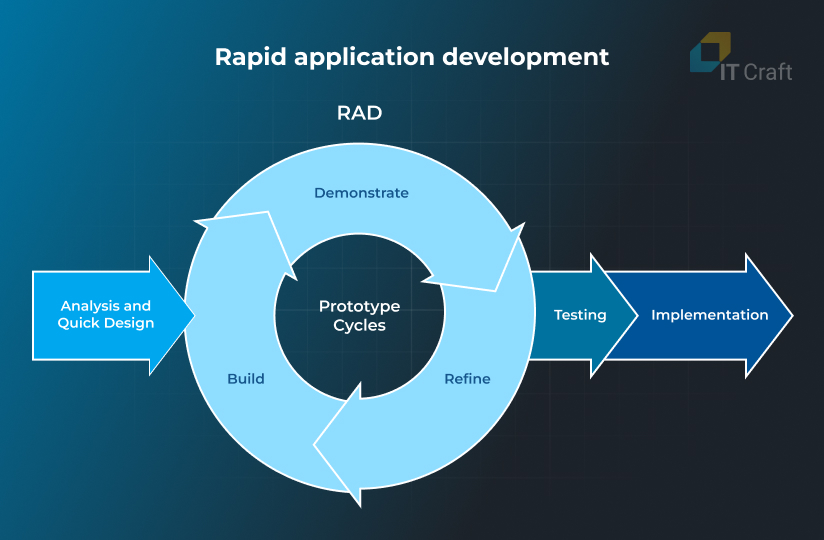

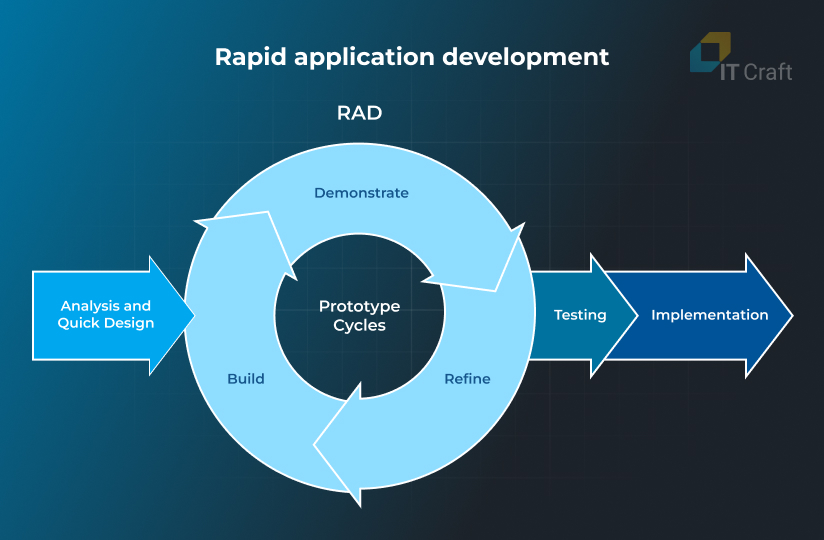

- Rapid application development

Rapid application development (RAD) supports delivery of a running project within a limited timeline when a client has only a vision. Its distinctive features are minimum planning and constant iterations based on user feedback.

Testing becomes an ongoing process throughout the development cycle. Also, active user involvement in development and testing is crucial for success.


Types of Problems

People often use the words error, defect, bug, failure, fault, issue, and flaw interchangeably, but their meanings differ slightly.

- An error is something a developer has done wrong in the source code. Errors may or may not affect the system.

- A defect is an error that is found during the development stage.

- A bug is an error that testers find. (Note: An unidentified error remains an error.)

- A failure occurs when the system does not meet requirements.

- A fault is an error that has reached the production stage and caused a failure.

- An issue is any type of problem that disturbs a user or slows down developers, even if the implementation was according to the specification.

- A flaw is an issue in the software architecture or overall system design.

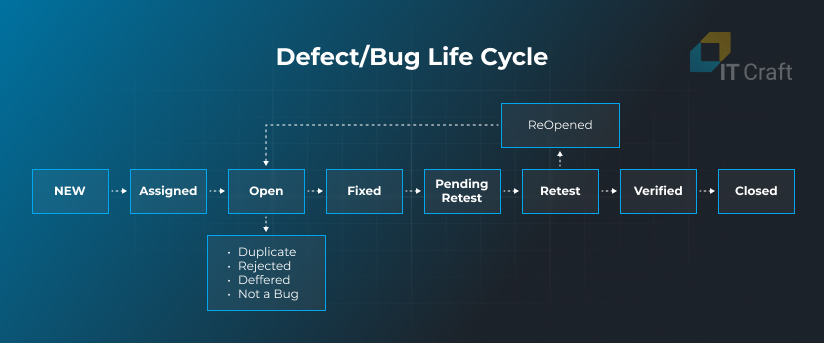

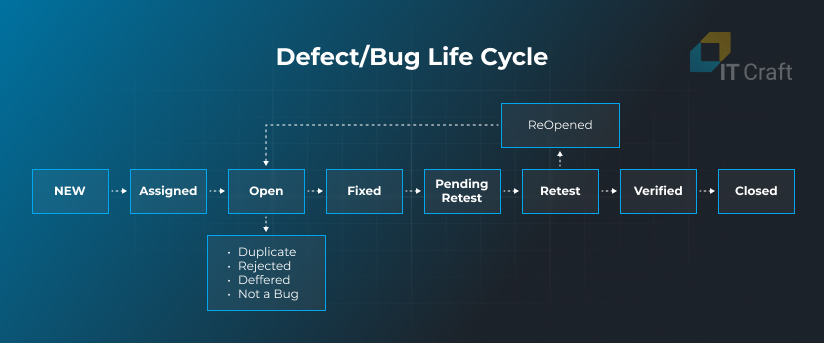

Defect/Bug Life Cycle

The defect/bug life cycle ensures the project team’s coordination when working on quality improvements. It is crucial for tracking statuses and addressing issues based on their priorities.

Source: Guru99

Bugs receive the following statuses throughout their life cycle:

- New: A tester detects a bug and adds it to the tracking system.

- Assigned: The testing team lead approves the bug, prioritizes it, and assigns the ticket to a developer.

- Open: The developer starts working on a fix.

- Fixed: The developer marks the bug as fixed.

- Pending: The developer passes the fix to a tester.

- Retest: A tester rechecks the fix.

- Verified: If the bug does not reappear, the tester marks it as verified.

- Reopened: If the bug keeps appearing, the tester sends it for another fix.

- Closed: The tester closes the ticket.

However, a developer may not work on a bug at all if it is:

- Duplicate: The tester has described a known problem.

- Rejected: The bug does not affect anything.

- Deferred: Developers can push non-priority bugs into the next iteration.

- Not a bug: It is how the system is actually supposed to work.
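The statuses above behave like a state machine, which is how bug trackers typically enforce the workflow. The Python sketch below is illustrative only: the `Bug` class is hypothetical, and the exact allowed transitions (for instance, that a reopened bug goes back to Open and a deferred one back to Assigned) are assumptions, since real trackers let teams configure them.

```python
# Allowed status transitions (assumed; modeled on the statuses described above)
TRANSITIONS: dict[str, set[str]] = {
    "New": {"Assigned"},
    "Assigned": {"Open", "Duplicate", "Rejected", "Deferred", "Not a bug"},
    "Open": {"Fixed", "Duplicate", "Rejected", "Deferred", "Not a bug"},
    "Fixed": {"Pending"},
    "Pending": {"Retest"},
    "Retest": {"Verified", "Reopened"},
    "Reopened": {"Open"},
    "Verified": {"Closed"},
    "Deferred": {"Assigned"},   # picked up again in a later iteration
    "Closed": set(),
    "Duplicate": set(),
    "Rejected": set(),
    "Not a bug": set(),
}


class Bug:
    """Hypothetical bug ticket that only accepts allowed status changes."""

    def __init__(self, title: str):
        self.title = title
        self.status = "New"
        self.history = ["New"]

    def move_to(self, new_status: str) -> None:
        if new_status not in TRANSITIONS[self.status]:
            raise ValueError(f"Cannot move from {self.status!r} to {new_status!r}")
        self.status = new_status
        self.history.append(new_status)


bug = Bug("Checkout button does not respond")
for status in ["Assigned", "Open", "Fixed", "Pending", "Retest", "Reopened",
               "Open", "Fixed", "Pending", "Retest", "Verified", "Closed"]:
    bug.move_to(status)
print(bug.status)
```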

Test Data

The QA and testing team must create and select representative data sets for test execution. Real-world data sets work best, but they can be supplemented with synthetic (generated) data when real data volumes are small, or when data is incomplete or biased. Please note that real data must be depersonalized.

Test data needs to include the following types:

- Blank data – check how the software functions when users leave unfilled fields.

- Valid data – see if the system processes and stores relevant data correctly.

- Invalid data – test how the system responds to unusual or unexpected inputs.

- Boundary data – ensure correct processing of rare boundary values.

- Wrong data – verify handling of wrong or irrelevant data.
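To make the five data types concrete, the sketch below pairs each category with sample inputs for a hypothetical age field that accepts whole numbers from 18 to 120. The `validate_age` validator, its messages, and the way samples are assigned to categories are assumptions for illustration.

```python
def validate_age(raw: str) -> str:
    """Hypothetical form validator: accepts whole-number ages from 18 to 120."""
    if raw.strip() == "":
        return "error: required"
    if not raw.strip().isdecimal():
        return "error: not a number"
    age = int(raw)
    if not 18 <= age <= 120:
        return "error: out of range"
    return "ok"


# (input, expected response) pairs grouped by test data type
TEST_DATA = {
    "blank": [("", "error: required"), ("   ", "error: required")],
    "valid": [("25", "ok"), ("64", "ok")],
    "invalid": [("-5", "error: not a number"), ("12.5", "error: not a number")],
    "boundary": [("17", "error: out of range"), ("18", "ok"),
                 ("120", "ok"), ("121", "error: out of range")],
    "wrong": [("twenty", "error: not a number"), ("DROP TABLE users", "error: not a number")],
}


def run_data_checks() -> list[tuple[str, str, str, str]]:
    """Return (category, input, expected, actual) for every mismatch."""
    failures = []
    for category, samples in TEST_DATA.items():
        for value, expected in samples:
            actual = validate_age(value)
            if actual != expected:
                failures.append((category, value, expected, actual))
    return failures


print(run_data_checks())  # []
```

The boundary samples deliberately sit on both sides of each limit, since off-by-one mistakes in range checks tend to hide exactly there.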

6. Most Common Software Testing Types

Various types of testing are needed to examine even the most complex software requirements.

While it’s impossible to cover them all within a short overview, let’s focus on the most important types:

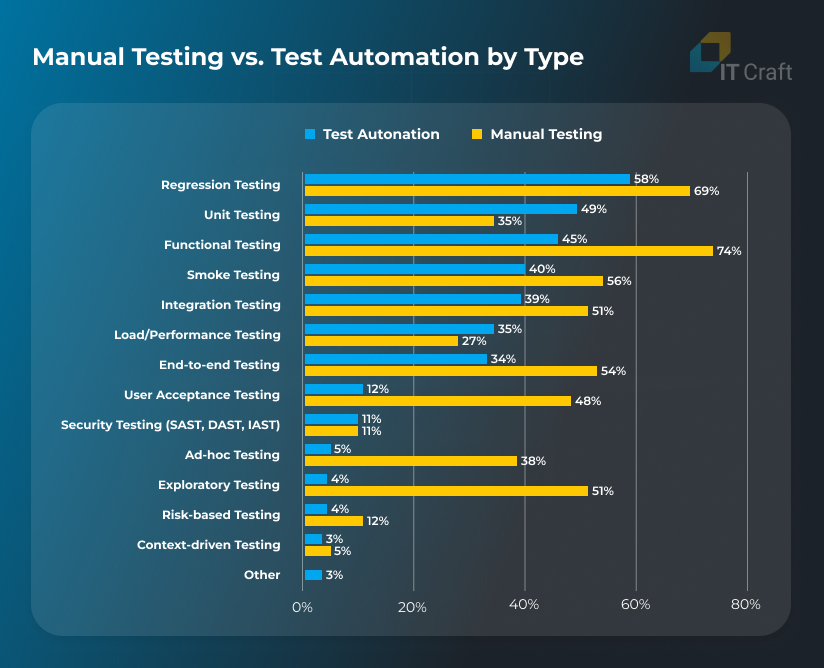

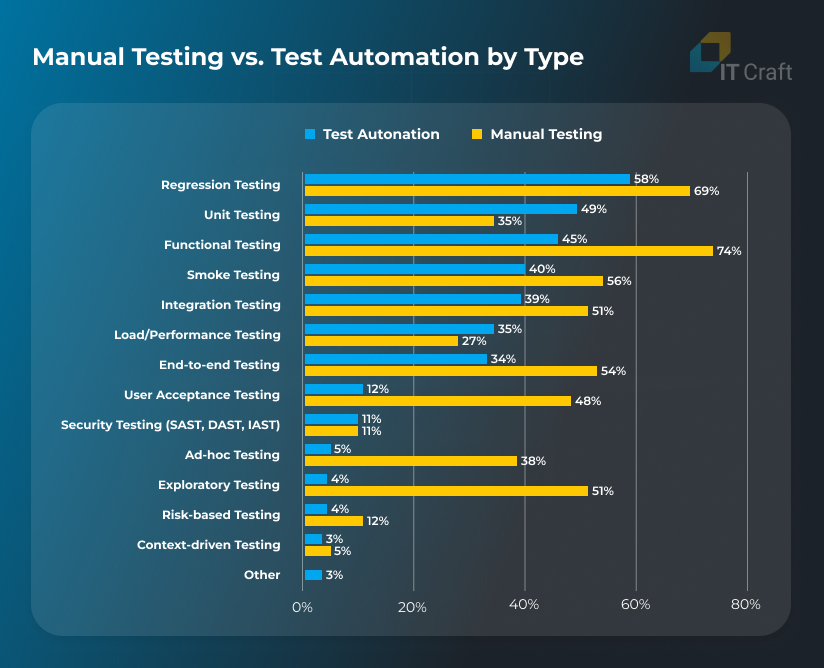

Manual Testing

Manual testing means that engineers check software manually, simulating how end users interact with it to identify problems, defects, or issues with the user experience. No specific tools are required to perform manual testing.

Automated Testing

Engineers check source code using pre-scripted tests. Automated testing reduces the cost of testing activities and minimizes human error. Testers need some development skills to prepare automated tests.

Source: 2023 Software Testing and Quality Report

Functional Testing

Functional testing examines whether software can handle the expected range of tasks under specific conditions. Testers perform high-level testing of how well software performs under the main user scenarios.

Smoke Testing

This is often the first testing step after part of the functionality is ready. Smoke testing briefly checks basic functionality to ensure it works and is stable enough for further testing.

Ad-hoc Testing

Ad-hoc testing is an informal, improvised activity that does not require a plan, documentation, or user scenarios. Testing engineers follow their intuition. Still, a general understanding of the product or its competitors is necessary.

Exploratory Testing

Exploratory testing is a structured process where testers check software and prepare test cases simultaneously. It helps the testing team collect knowledge in early project stages and improve the test design.

Context-Driven Testing

Context-driven testing focuses on simulating real-world users and their habits. Testers focus on how people might actually use the software, even if that usage is far from best practices.

Non-Functional Testing

Non-functional testing addresses vital non-functional aspects that could hinder user experiences, including performance, reliability, stability, security, and so on. It’s important to note that non-functional testing must be measurable.

Positive Software Testing

Positive testing ensures software works as expected under predetermined conditions. Testers provide valid inputs and/or data, checking if the system responds properly.

Negative Software Testing

During negative software testing, testers check software responses in negative scenarios, such as with unexpected user behavior, invalid input data, technical challenges, or third-party attacks. This check aims to prevent software from crashing.

Usability Testing

This type of testing evaluates the user experience. It aims to examine ease of use, navigation, activity completion time, and more. During usability testing, the team collects and analyzes feedback from users along with in-app data on user sessions.

Security Testing

Security testing evaluates software security mechanisms, identifying system weaknesses and vulnerabilities. Comprehensive security testing is critical for industries working with sensitive data, such as healthcare, fintech, and ecommerce.

Performance Testing

Performance testing provides information about website or app speed, stability, and scalability under predetermined workloads. Testers check the system’s response to both anticipated and extreme conditions.

Compatibility Testing

Compatibility testing aims to reveal how software performs on different operating systems, in different browsers, under different network conditions, or using specific hardware. Because users can work from a myriad of environments, it is crucial to shortlist the most important combinations.

End-to-End Testing

The testing team verifies the software flow from start to finish to ensure the software system and interconnected systems work as expected and provide an uninterrupted user experience.

Risk-Based Testing

The team assesses the probability of risks based on a software product’s complexity, role in business processes, frequency of use, and more. It then focuses on the areas where defects are most likely and would have the biggest impact.
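Risk-based prioritization is often expressed as a score. The sketch below assumes a common convention, risk = probability × impact, each rated on a 1 to 5 scale; the `ProductArea` class, the product areas, and their ratings are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ProductArea:
    """A part of the product, rated on two hypothetical 1-5 scales."""
    name: str
    probability: int  # how likely defects are (complexity, change rate, ...)
    impact: int       # how much a failure would hurt the business or users

    @property
    def risk(self) -> int:
        # Assumed convention: risk score = probability x impact
        return self.probability * self.impact


def prioritize(areas: list[ProductArea]) -> list[tuple[str, int]]:
    """Return (name, risk score) pairs, riskiest areas first."""
    ranked = sorted(areas, key=lambda a: a.risk, reverse=True)
    return [(a.name, a.risk) for a in ranked]


areas = [
    ProductArea("Search", probability=3, impact=2),
    ProductArea("Payments", probability=4, impact=5),
    ProductArea("Profile settings", probability=2, impact=2),
    ProductArea("Reporting", probability=3, impact=4),
]
print(prioritize(areas))
```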

Mobile Testing

Mobile testing is complex due to screen and operating system fragmentation, regular operating system updates, and various hardware configurations, particularly on the Android platform. Testers can use emulators to cover as many devices as possible. Still, testing on real devices is preferred because emulators may not reproduce certain issues.

Regression Testing

Regression testing is critical for large, long-term projects. Testers re-run automated tests each time developers change the codebase to check whether the new code breaks existing functionality. Catching a regression right after the change that introduced it simplifies troubleshooting.
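One lightweight form of automated regression testing compares current outputs with baseline results recorded from the last approved release. In the sketch below, `apply_discount` and its baseline values are hypothetical; the third case pins a previously fixed bug (negative totals) so it cannot silently return after a later change.

```python
from decimal import Decimal, ROUND_HALF_UP


def apply_discount(total, percent):
    """Hypothetical business function that developers keep changing."""
    discounted = total * (100 - percent) / 100
    # Never charge less than zero (a previously fixed bug, kept under regression test).
    return max(discounted, Decimal("0")).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


# Baseline results recorded from the last approved release (hypothetical values).
BASELINE = [
    ((Decimal("100.00"), 10), Decimal("90.00")),
    ((Decimal("19.99"), 15), Decimal("16.99")),
    ((Decimal("5.00"), 120), Decimal("0.00")),
]


def run_regression_suite():
    """Re-run every baseline case and return the ones whose output changed."""
    failures = []
    for args, expected in BASELINE:
        actual = apply_discount(*args)
        if actual != expected:
            failures.append((args, expected, actual))
    return failures


if __name__ == "__main__":
    failures = run_regression_suite()
    print("no regressions" if not failures else f"regressions found: {failures}")
```

Running a suite like this on every commit points directly at the change that altered existing behavior.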

Recovery Testing

Making backups alone may not be enough for successful disaster recovery. Each team needs to design and test a recovery plan to verify that its backups can actually be restored in an emergency.
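A recovery test goes one step further than creating a backup: it restores the backup and checks that the data survived. The sketch below uses the `backup()` method of Python's built-in `sqlite3` module with in-memory databases for brevity. The `orders` table and its contents are hypothetical, and a real recovery plan would restore from separate backup storage into a clean environment.

```python
import sqlite3


def create_production_db():
    """Hypothetical production database with a few sample orders."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, amount REAL)")
    conn.executemany("INSERT INTO orders (amount) VALUES (?)", [(19.99,), (5.0,), (42.5,)])
    conn.commit()
    return conn


def snapshot(conn):
    """Read all rows in a fixed order so two databases can be compared."""
    return conn.execute("SELECT id, amount FROM orders ORDER BY id").fetchall()


def verify_backup_restore():
    """Back up, simulate data loss, restore, and confirm the data is intact."""
    source = create_production_db()
    expected = snapshot(source)

    # 1. Make the backup (a second in-memory database stands in for backup storage).
    backup = sqlite3.connect(":memory:")
    source.backup(backup)

    # 2. Simulate a disaster: the original database is lost.
    source.close()

    # 3. Restore into a fresh database and compare with the pre-disaster snapshot.
    restored = sqlite3.connect(":memory:")
    backup.backup(restored)
    ok = snapshot(restored) == expected

    backup.close()
    restored.close()
    return ok


if __name__ == "__main__":
    print("recovery test passed" if verify_backup_restore() else "recovery test FAILED")
```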

Localization Testing

Every localized version of the website or app needs to be thoroughly checked. Testers examine whether the software displays language-specific characters correctly, has a natural-looking layout, uses the correct date and time formats, and contains appropriate cultural references.
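Date and time formats are among the easier localization checks to automate. The sketch assumes a hypothetical `format_order_date` function and hand-written expectations for each locale. The test date uses a day greater than 12, so a swapped day and month cannot pass unnoticed.

```python
from datetime import date

# Hypothetical app code: the date pattern each locale should use.
_APP_DATE_PATTERNS = {
    "en-US": "%m/%d/%Y",
    "en-GB": "%d/%m/%Y",
    "de-DE": "%d.%m.%Y",
    "ja-JP": "%Y/%m/%d",
}


def format_order_date(d, locale):
    """Hypothetical function under test: format an order date for a locale."""
    return d.strftime(_APP_DATE_PATTERNS[locale])


# Expectations written independently by testers (or native-speaking reviewers).
# 25 March is used because a day above 12 exposes swapped day and month fields.
TEST_DATE = date(2024, 3, 25)
EXPECTED = {
    "en-US": "03/25/2024",
    "en-GB": "25/03/2024",
    "de-DE": "25.03.2024",
    "ja-JP": "2024/03/25",
}


def check_date_formats():
    """Return a list of (locale, expected, actual) for every mismatch."""
    mismatches = []
    for locale, expected in EXPECTED.items():
        actual = format_order_date(TEST_DATE, locale)
        if actual != expected:
            mismatches.append((locale, expected, actual))
    return mismatches


if __name__ == "__main__":
    print(check_date_formats() or "all date formats match")
```

Layout, character rendering, and cultural references still need human review; automation only covers the mechanical part.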


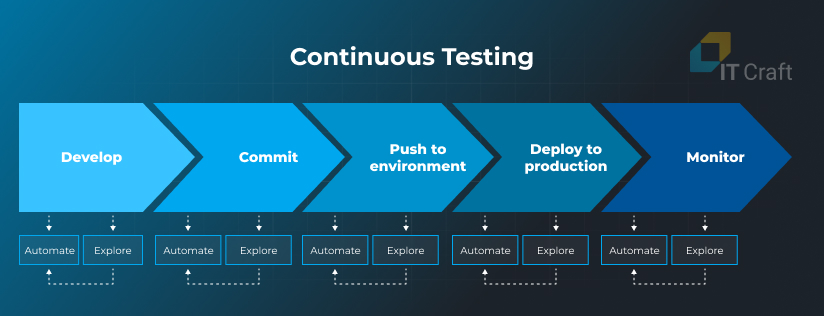

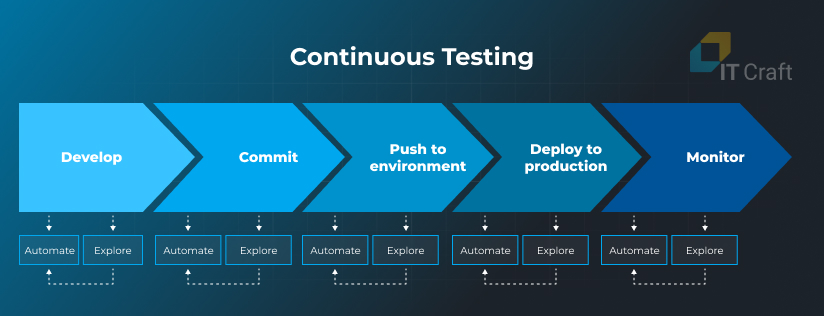

Role of Test Automation in the CI/CD Pipeline

Automated testing is an essential activity within the DevOps approach, which development teams use to build a CI/CD pipeline and save clients’ resources.

Test automation is a key part of continuous testing, an ongoing process that runs every time developers change the source code.

Once developers finish a piece of code, they submit it for automated checks that include:

- regression testing,

- integration testing,

- performance testing, and

- other types of testing depending on requirements.

After the source code passes these tests, it moves on to a manual check, if required, or to the integration stage.
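In practice, these stages are defined in a CI tool such as Jenkins, but the underlying gating logic can be sketched in a few lines. The stage names below mirror the list above; the checks themselves are hypothetical placeholders that return pass or fail. The runner stops at the first failure, so developers get feedback as early as possible and a failing change never reaches the next stage.

```python
def run_pipeline(stages):
    """Run each (name, check) stage in order and stop at the first failure (fail fast).

    Returns (passed, log), where log lists the outcome of every stage that ran.
    """
    log = []
    for name, check in stages:
        passed = check()
        log.append(f"{name}: {'passed' if passed else 'FAILED'}")
        if not passed:
            return False, log  # block the change; later stages are skipped
    return True, log


if __name__ == "__main__":
    # Hypothetical placeholder checks; a real pipeline would invoke test runners here.
    stages = [
        ("regression tests", lambda: True),
        ("integration tests", lambda: True),
        ("performance tests", lambda: False),  # simulate a failing check
    ]
    ok, log = run_pipeline(stages)
    print("\n".join(log))
    print("promote to manual check or integration" if ok else "change rejected")
```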

Continuous testing removes the bottleneck of repeated manual code uploads and checks. It enables the project team to cut deployment time from hours to minutes, making multiple releases per day possible.

As a result, the team introduces feature updates, security patches, or fixes without delay.

Here are the benefits of using continuous testing within the CI/CD pipeline:

- Increased team performance

Testing becomes more efficient because developers no longer wait for someone to check their code and provide feedback. Team performance increases, and the team can ship more deliverables within the same timeline than teams that do not use a CI/CD pipeline.

- Higher code quality

New source code goes through multiple checks, and developers learn what they need to improve before their code goes live. This helps prevent updates from negatively affecting the existing codebase.

- Lower development costs

Quick feature delivery and an optimized testing workload decrease project development costs. Indirect costs caused by downtime or unstable operation also shrink.

- High customer satisfaction

Automated testing allows for simulating and checking multiple use case scenarios to eliminate glitches and inefficiencies in the user experience. As a result, end users get an improved product they enjoy using.



Can a Software Development Team Work without QA Engineers?

For simple projects, they can. However, in our experience, it makes little sense to do so.

Eventually, you will conclude that you need to carve out a separate role or team that focuses on QA responsibilities and has a different outlook on software.

Here is what makes QA engineers an important part of a project team:

- Work on testing strategy, planning, and execution

Even if you don’t have a dedicated QA team or expert, you must organize an efficient testing workflow. Assigning this task to someone who has expertise in and a passion for testing is always wise.

- Focus on potential issues

Just as software developers think about how the software architecture will support future changes and scaling, QA and testing engineers concentrate on discovering potential issues from various perspectives, including those which developers may not consider.

- Get an independent outlook

Developers need a fresh outsider’s look at the source code to help them identify and eliminate bugs, non-compliance with requirements, or potential vulnerabilities.

By identifying issues quickly and early, QA engineers save the team’s resources and the client’s money. Test automation helps make expensive post-deployment fixes rare.

- Improve the customer experience

QA engineers focus on the customer's perspective, ensuring the software is user-friendly and meets both business requirements and user expectations.

- Keep developers focused

Developers maintain high productivity when they do not have to switch contexts between development and testing responsibilities.

For QA and testing to succeed, engineers must combine a deep understanding of user needs and project goals, knowledge of testing methods and techniques, and a passion for quality.

To highlight the importance of QA on a software development project, let's consider common misconceptions about quality assurance and testing:

- Quality assurance prevents all errors

The hard truth is that QA cannot prevent all defects and issues. Some remain unnoticed, making QA an unending activity. However, testers help find and eliminate the issues that most critically affect system stability and user experiences.

- Testing can be 100% automated

Automation is only a tool that helps testers handle routine tasks, where fatigue causes humans to start making errors. It cannot replace manual testing, in which a QA engineer uses acquired expertise to check unexpected scenarios or look for non-trivial issues.

- Quality assurance is expensive

Testing costs are part of the overall investment in high-quality software development. Saving on QA is a false economy that has caused many projects to collapse because they delivered products that were impossible to use.


Quality Assurance Software and Tools

For manual testing, QA engineers usually do not require specialized testing tools. They can use common apps such as Google Docs to design and update test plans, and management software like Jira to track tests.

For automated testing, by contrast, the team can choose from a wide range of tools, which can be combined and integrated. To name a few:

- BrowserStack lets engineers test app compatibility with different browsers and environments.

- Selenium is a browser automation framework that includes several web testing instruments for regression, cross-browser, and other types of web UI testing.

- Apache JMeter is primarily used for performance testing, but it can also be used for unit testing.

- SpecFlow is a behavior-driven development (BDD) framework for .NET applications that simplifies code refactoring and later acceptance testing.

- The NUnit and TestNG frameworks automate unit testing.

- Postman and SoapUI help design and maintain automated API tests.

- Jenkins is an automation server used for continuous integration and continuous testing, ensuring source code goes through the required pipeline steps.

- Jira is used by the project team to track task statuses, including those of bugs and defects.

- Slack ensures efficient communication between all project participants via channels and direct messages.


QA and Software Testing Trends

Software development, software testing, and quality assurance are undergoing substantial changes that allow the project team to improve the software delivery workflow and increase QA productivity.

Here are some of the essential trends:

AI in Testing

AI-augmented software testing is likely to see wider adoption among QA teams. Generative AI reduces the time QA engineers need to design test cases by producing a first draft within seconds and then elaborating on it.

AI-based testing instruments can also save QA engineers’ time by creating simple automation scripts, increasing test coverage, and suggesting improvements in test cases.

AI can also analyze software behavior in real time, detecting deviations before they affect end users.

Security Testing

Security remains one of the biggest challenges for everyone maintaining a custom system or running a software business.

According to Accenture's State of Cybersecurity Resilience 2023 report, 97% of organizations surveyed faced an increased number of cyber threats within the last two years, and those threats have become more complex and targeted.

Another alarming fact is that 43% of attacks target small businesses, while only 14% of small businesses are prepared to defend against them.

In these circumstances, ongoing security testing of source code, infrastructure, and processes becomes crucial for an organization’s successful operation.

Shift-Left Testing

The wide adoption of CI/CD, continuous testing, and DevOps best practices has led to shift-left testing, in which testing in the pipeline starts as early as possible.

To do this, the team adds testing to each stage.

Source: Global App Testing

Discovering problems early, before defects reach the pre-production stage, helps reduce development costs and increases awareness of specific challenges among project team members.

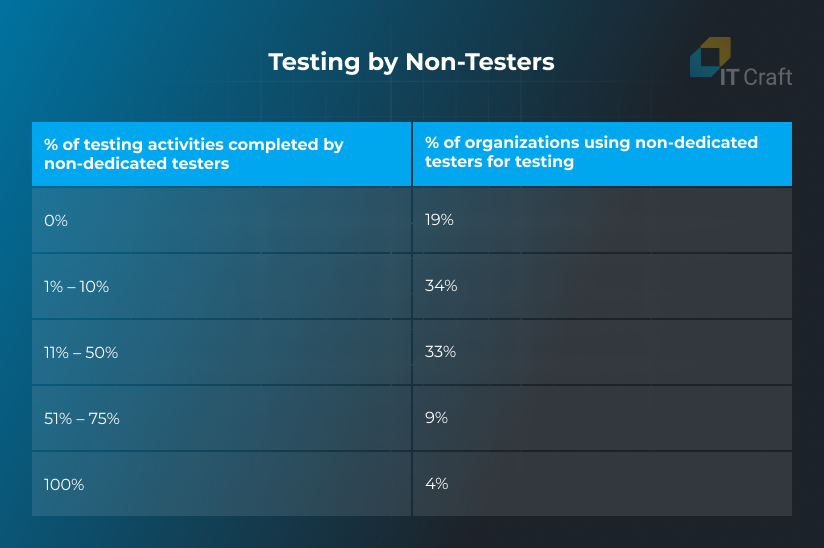

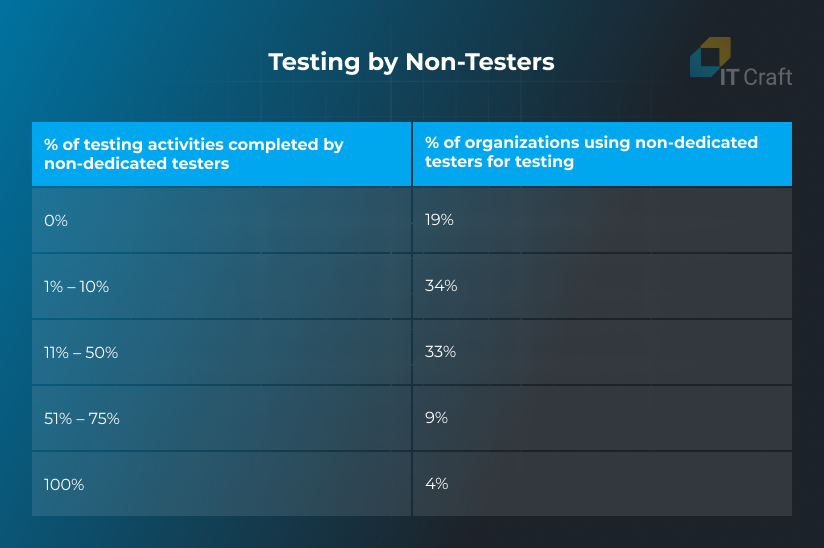

Testing by Non-Testers

One direct result of shift-left testing is that, according to PractiTest's 2024 State of Testing Report, a large part of software testing is now done by non-testers as well as dedicated testers:

Source: The State of Testing Report by PractiTest

Two-thirds of organizations distribute testing tasks between the testing team and other project team members, such as developers, product owners, support engineers, and even end users.

Such broad collaboration allows for checking software from different angles and reaching QA goals.

Low-Code/No-Code Testing Tools

Low-code and no-code testing tools can significantly boost the workflow. They help teams quickly verify concepts or source code without exploring it in depth.

Also, low-code and no-code platforms enable users to work on test automation without prior knowledge of programming languages, which can be useful for manual testers and non-technical team members.

As a result, low-code/no-code tools can support an organization’s efforts in shifting testing to the left.

Performance Engineering

Software performance and stability are critical for a high-quality user experience, especially when demand is growing rapidly.

Performance engineering moves performance testing from later to earlier development stages, with the QA/software testing team helping developers design resilient applications from the beginning.

In this way, the project team solves performance issues as soon as possible, saving time and eliminating performance bottlenecks that could harm the user experience.


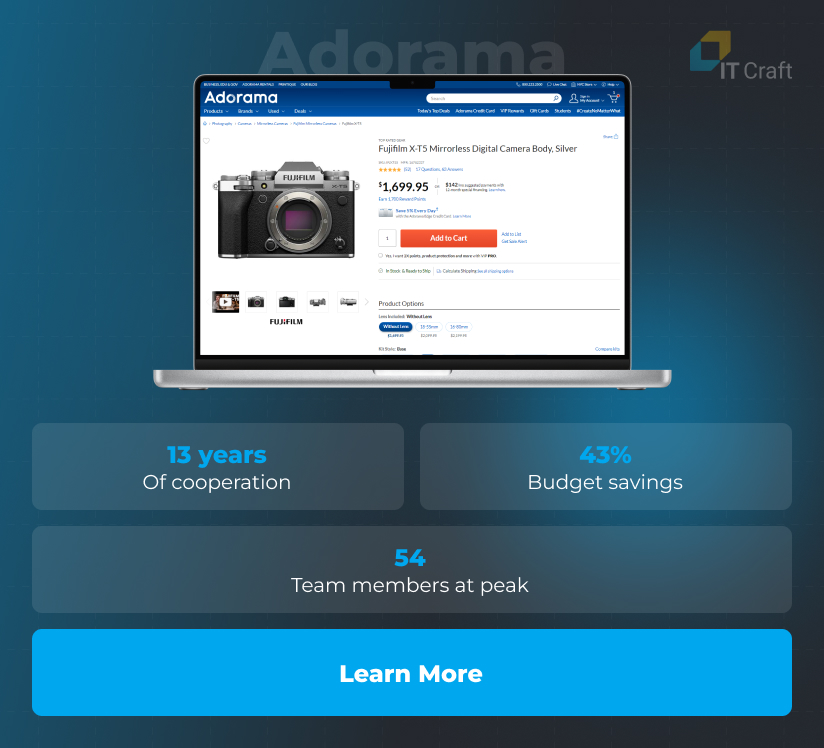

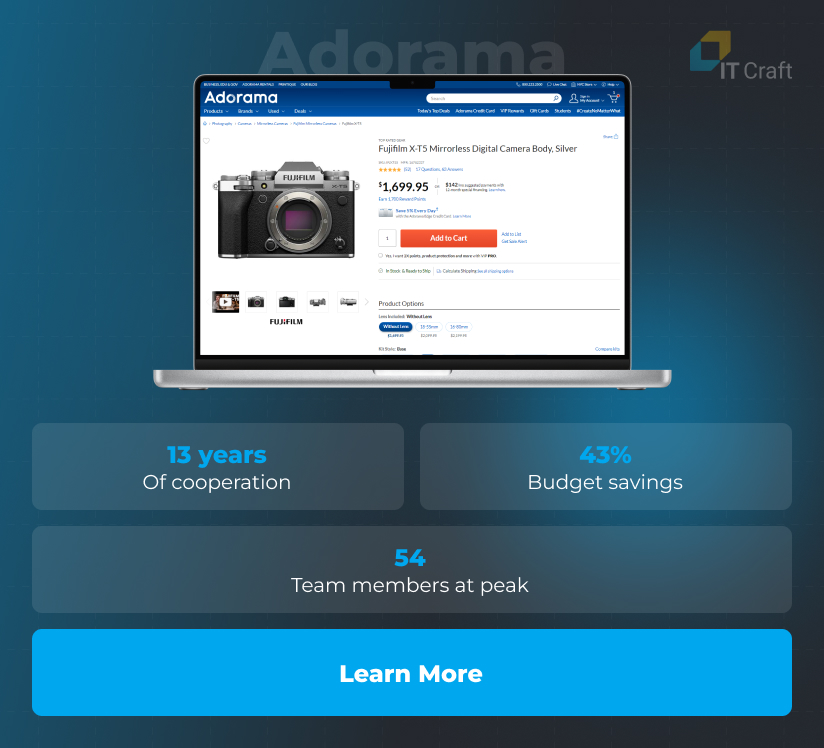

QA and Testing Services at IT Craft: Adorama Case Study

Adorama

A major retailer of photography, audio, video, and leisure equipment wanted to improve and expand their custom ecommerce platform.

To do this, the IT Craft development team took over the technical implementation, working on both time-and-materials and fixed-price tasks. We added specialists flexibly, as soon as they were required.

Assigning a dedicated QA and testing team helped facilitate modernization, updates, and expansion activities, enabling the team to achieve page load times under three seconds and zero downtime.


Conclusion

Correct planning, execution, and feedback on a project's quality assurance and testing activities help a business stay ahead of the competition with uncompromised user experiences.

While quality assurance and testing may seem unnecessary to businesses that are unaware of their value, QA helps prevent issues or detect them as soon as possible when they do occur. As a result, the project team delivers software quickly and at a reasonable cost.

Organizations investing in quality assurance face fewer challenges compared to those that cut testing budgets.

A correct workflow is crucial for efficient testing. Depending on a project’s scope and specifics, different approaches can work better than others. If you want to determine which approach works best for your project, it’s wise to consult with professionals on the testing workflow and distribution of responsibilities within the team.